The AI Panic Trade

Distinguishing the genuinely impaired from the merely exposed.

Being contrarian is easy.

Being contrarian and right is where the money is.

Last Friday, April 10, we made a new recommendation inside VMF’s Strategic Asset Allocation. It is already up more than 15%. But this is not a victory lap. It is something more useful than that.

It is a case study in what happens when the market panics first and thinks later.

What follows is an excerpt from April’s issue of VMF’s Strategic Asset Allocation, titled “The Great AI Washout.” In that issue, we took the other side of one of the market’s laziest new trades: the idea that if AI is getting smarter, then anything that smells like software must be in trouble.

That is how crowds lose money.

They take a real threat, flatten every distinction, and sell the whole complex as if nuance were a luxury they can no longer afford.

We do not see it that way.

We think AI is real. We think the disruption is real. And precisely because we take it seriously, we also think the market’s first reaction has been too blunt. Some businesses deserve to be punished. Others are being thrown overboard with them. That is where the asymmetry lives.

That is what this excerpt is about.

Not denying the panic. Exploiting it.

The specific recommendation, and the broader actionable positioning around it, remain behind the paywall. But the thinking that produced it begins here.

Below is “The AI Panic Trade.”

To understand why fear has ripped through so many corners of the market, you have to stop thinking about AI as a better chatbot.

That framing is already obsolete.

What changed is not merely that the models got smarter. It is that they started to feel less like tools... and more like coworkers. Digital ones. Tireless ones. Ones that do not just answer questions, but begin to do the work. That is the real psychological break the market is trying to process right now. And once investors began to grasp it, the repricing in software and adjacent fields stopped looking like a routine drawdown and started to resemble something closer to a stampede.

Anthropic’s latest releases helped crystallize that fear by pushing the narrative from “AI is coming” toward something more immediate: AI agents[1] that can organize files, send emails, create spreadsheets, analyze reports, review legal documents, patch security issues, and even help generate software itself.

Pause there for a second.

Imagine the scene from the perspective of a corporate decision-maker. You are not being pitched a futuristic science project...

You are being shown a system that can read the contract, summarize the risk, build the model, clean the dataset, write the first draft, flag the anomaly, and maybe even generate the code needed to automate the next step. Not perfectly. Not autonomously in every case. But well enough to make one uncomfortable thought impossible to ignore: how many layers of paid software, outsourced services, and junior knowledge work are really as indispensable as they looked six months ago?

That is where the fear comes from. It is not just about coding. It is about the sudden sensation that a wide range of cognitive workflows may be getting compressed into one accelerating layer.

And that is exactly why the reaction spread beyond software developers.

In law, the fear is obvious. If a model can rapidly review documents, compare clauses, flag inconsistencies, and summarize implications, then the market immediately starts asking hard questions about the value chain around routine legal work.

In finance, the same dynamic appears in a different costume. If a model can ingest a messy dataset, organize it, surface the drivers, build a clean spreadsheet, and draft an initial analytical output in minutes, then data services, workflow tools, and some parts of the research stack suddenly look a lot more vulnerable than they did before.

In cybersecurity, the fear takes yet another form: if models can increasingly identify and patch vulnerabilities, then parts of the market begin to worry that the old labor-intensive security model could also face pressure.

Anthropic’s product evolution helped bring all of those fears into view at once.

That is why we wrote in February that obsolescence is no longer a theory... it is a timeline. When capability jumps, business models do not get repriced gradually.

They get repriced suddenly. The market does not wait around for ten quarters of audited evidence. It front-runs the possibility. It imagines the end-state, panics, and starts liquidating anything that looks even remotely exposed. That is what dispersion looks like in a true acceleration regime: one cluster of companies gets treated as the new infrastructure of the future, while another gets shoved toward the penalty box before the jury has even met.

And let’s be honest: the fear is not irrational.

If you were running a software company built on charging customers for repetitive workflow, light analysis, templated documentation, or narrow productivity features, would you really sleep well after watching the frontier models evolve this quickly?

Probably not.

The market is right to be alarmed. It is right to understand that a new technological layer is arriving with the power to collapse feature sets, compress pricing, and pull more value into the hands of the platforms that own the intelligence itself. We are comfortable saying that plainly because we have not been spectators to this disruption.

In our other Tiers, we have been building exposure to one of the grand catalysts behind it. We know this wave is real. We know the threat is real. And we know it reaches far beyond coding.

But that is only half the story. Because when markets get a credible glimpse of the future, they rarely price it with surgical precision. They price it with a flamethrower.

That is what happened here.

The first reaction was not to separate fragile software from mission-critical software... not to distinguish the feature from the platform... not to ask which products are sticky, embedded, and deeply woven into real corporate workflows.

The first reaction was much cruder. If it smelled like software, if it touched data, if it lived anywhere near the cognitive assembly line, it got marked down. That is how you end up with a market that may be directionally right about disruption, but wildly imprecise about who actually gets disrupted first.

And that distinction is everything, because the market began to act as if any new plug-in could suddenly take over everything from data analysis to tax planning to legal services.

So yes... the fear is understandable.

You can see it. You can feel it. In fact, it is easy to picture why it became so visceral. A world in which an AI agent sits beside every worker, drafts before you draft, analyzes before you analyze, and automates before you can bill the hour is a world in which many equity stories deserve to be re-examined from the ground up.

The market is not hallucinating that threat. It is staring directly at it.

But markets do have a habit of moving from insight to exaggeration with astonishing speed.

And that is where our interest begins.

Once a market stops distinguishing between the truly vulnerable and the merely exposed, dislocation takes on a different character. The question is no longer whether disruption is real. It clearly is. The question is whether, in its rush to price the future, the market has begun to punish some high-quality businesses as if the verdict were already final.

That is the opportunity we want to explore. Because outside the demo reel, the real economy is much messier than the panic implies.

Enterprise software is not a weekend side project.

It is not a browser plug-in.

And it is certainly not just a shiny interface sitting on top of generic tasks. In the real world, these systems are stitched into the guts of organizations.

They are wired into approvals, permissions, compliance layers, customer records, reporting structures, accounting logic, communications history, and years of operational muscle memory.

Replacing them is not like swapping one app for another. It is more like replacing the plumbing in a skyscraper while the building remains occupied.

You see... the switching costs are high because the embeddedness is high. And the embeddedness is high because the workflow itself has become the product.

Then comes the data problem... which is really a moat problem in technical form.

A frontier model may be brilliant. But brilliance alone does not grant it immediate access to a company’s proprietary records, internal processes, client history, negotiation patterns, billing architecture, security protocols, or institutional memory.

And even when access is granted, someone still has to decide how the model interacts with those assets, where the guardrails sit, who is accountable when errors occur, and how the outputs get folded back into a living business process.

That is why the software layer is not merely “software.” In many cases, it is a system of record, a system of control, or a system of workflow orchestration. And those systems are far harder to rip out than the market’s first reaction tends to assume.

This is also where our own prior work becomes relevant.

In February, we argued that a productivity-led growth boom may be unfolding in real time... that AI is not a one-factor story, but part of a broader stack in which software gets cheaper to build and deploy, work gets automated, labor economics begin to shift, and output can rise without the usual inflationary heat.

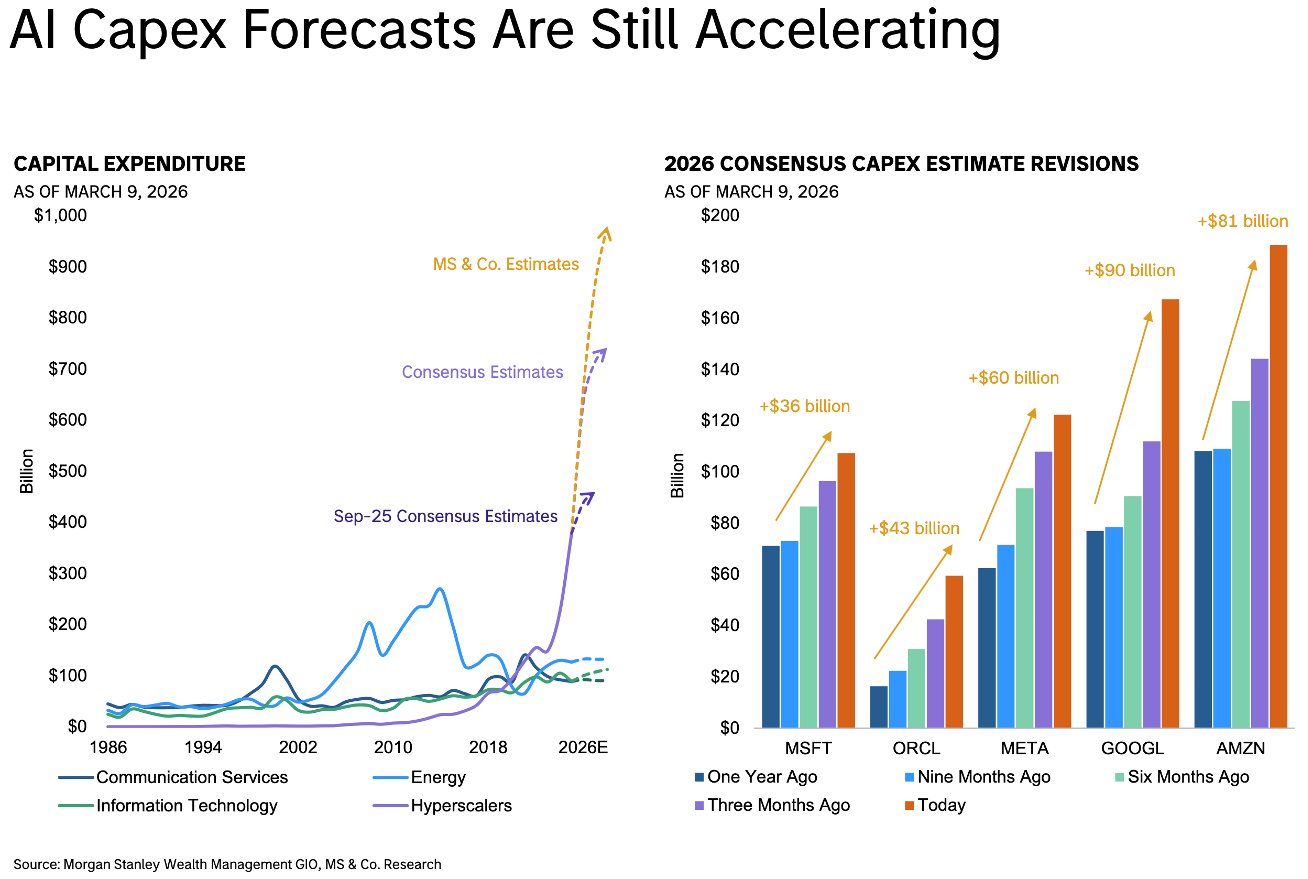

We also highlighted the simplest tell of all: hyperscaler capex.[2]

This is not the spending pattern of an economy preparing for technological stagnation. It is the footprint of an industrial-scale rebuild of the digital economy.

And if that thesis is even roughly right, then the second-order implication is crucial: lower software-production costs do not necessarily mean less software value. In many cases, they can mean more experimentation, more deployment, more automation, more throughput, and more economic activity to coordinate.

Productivity shocks do not simply eliminate tasks... they often expand the system around them. That is exactly why we wrote that stronger activity can arrive with disinflationary pressure when productivity is doing the heavy lifting. Higher output per hour, lower unit costs, faster throughput... that combination is not a recipe for economic shrinkage. It is a recipe for a bigger pie and a fiercer scramble over who gets to own the toll roads around it.

That is one reason Marc Andreessen’s recent intervention is so interesting. His point, stripped to its essence, is that the straight-line “AI destroys jobs, therefore demand collapses” narrative is far too simplistic.

In his view, AI can restore a more historically normal rate of productivity progress, expand output, compress prices, and ultimately create more opportunity rather than less.

You do not have to buy every ounce of Silicon Valley optimism to recognize the deeper logic: when productive capacity rises sharply, new categories emerge, old bottlenecks loosen, and the demand for coordination often expands rather than contracts. In other words, the arrival of AI agents can be disruptive at the task level while still being expansionary at the system level.

And if that is even partially true, then the market is making a category mistake when it assumes that automation must be uniformly bearish for the companies sitting inside enterprise workflows.

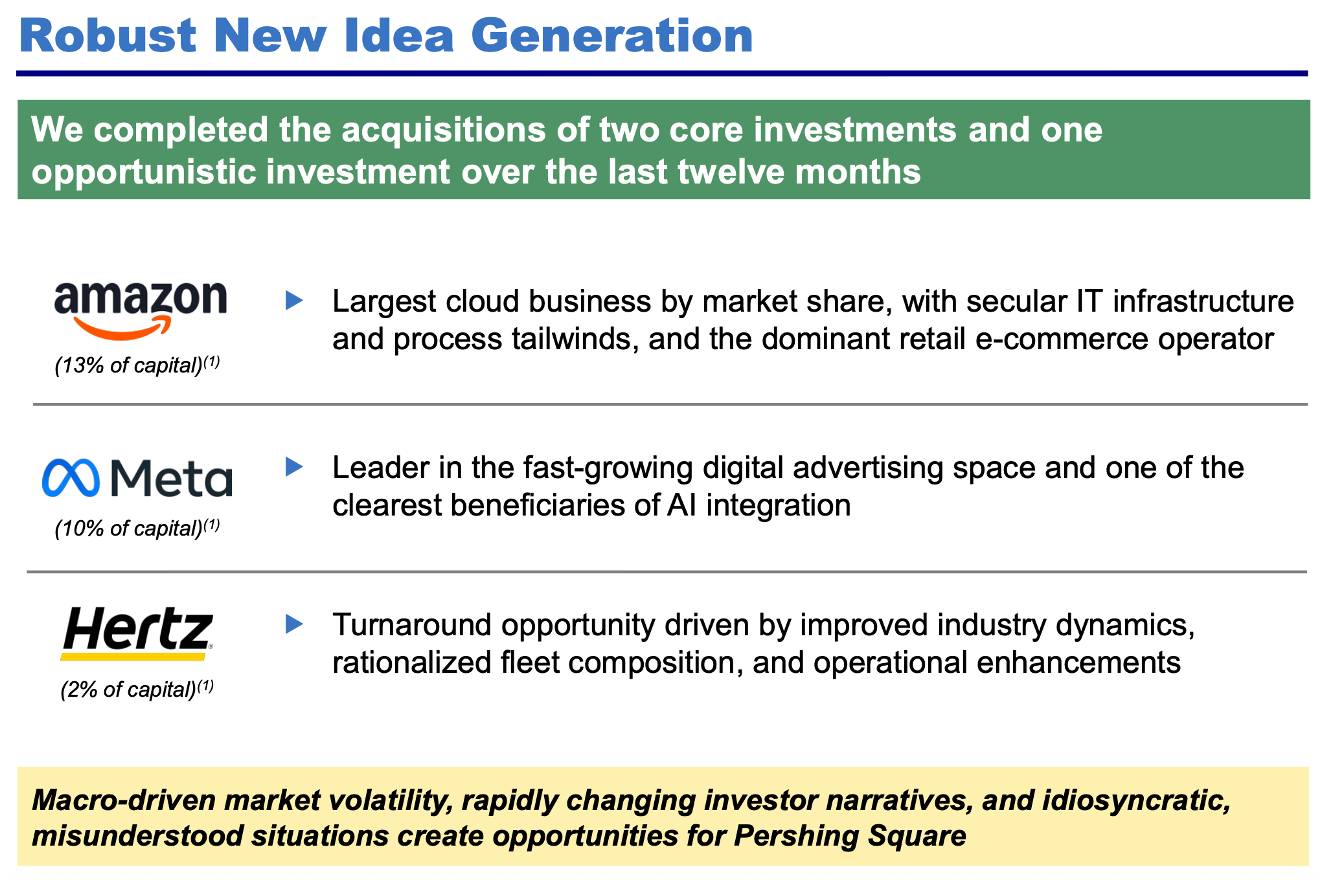

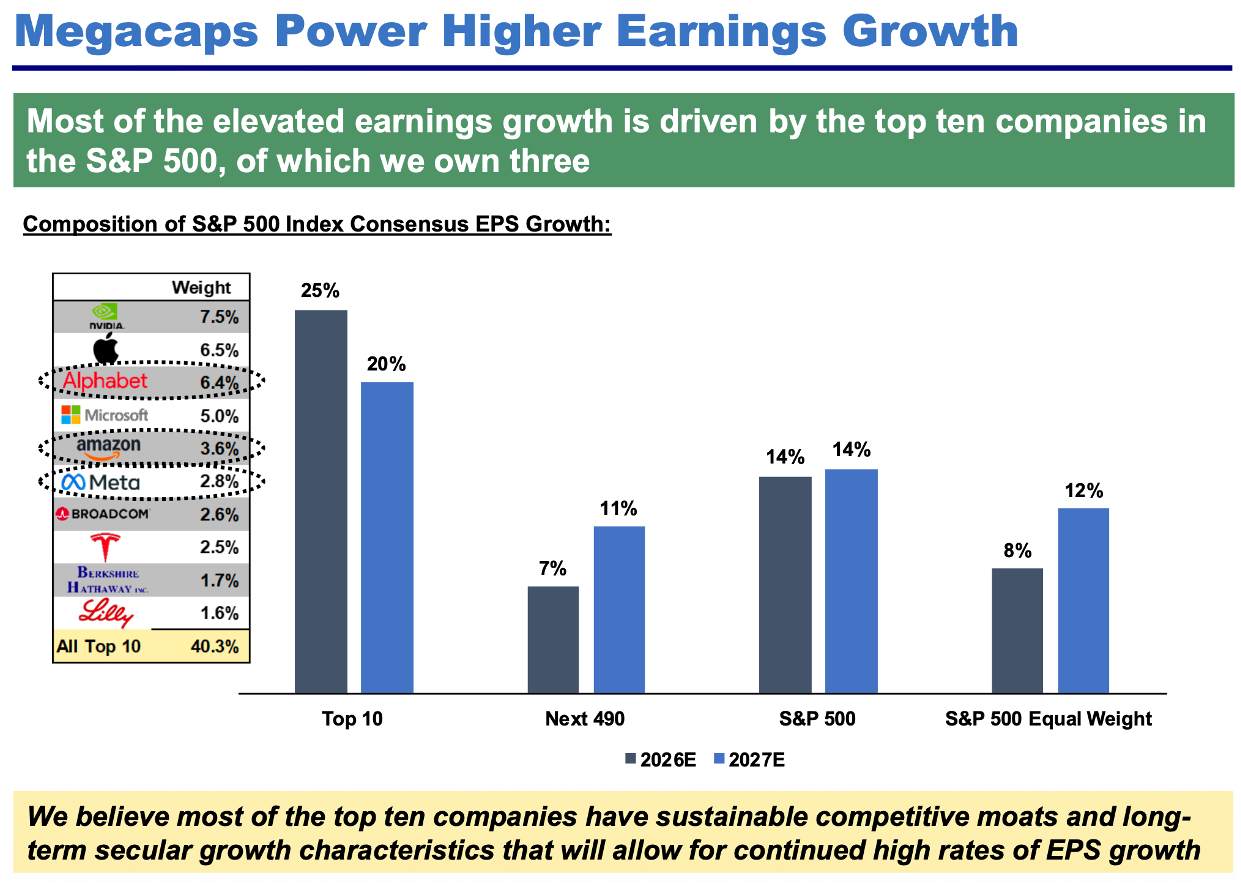

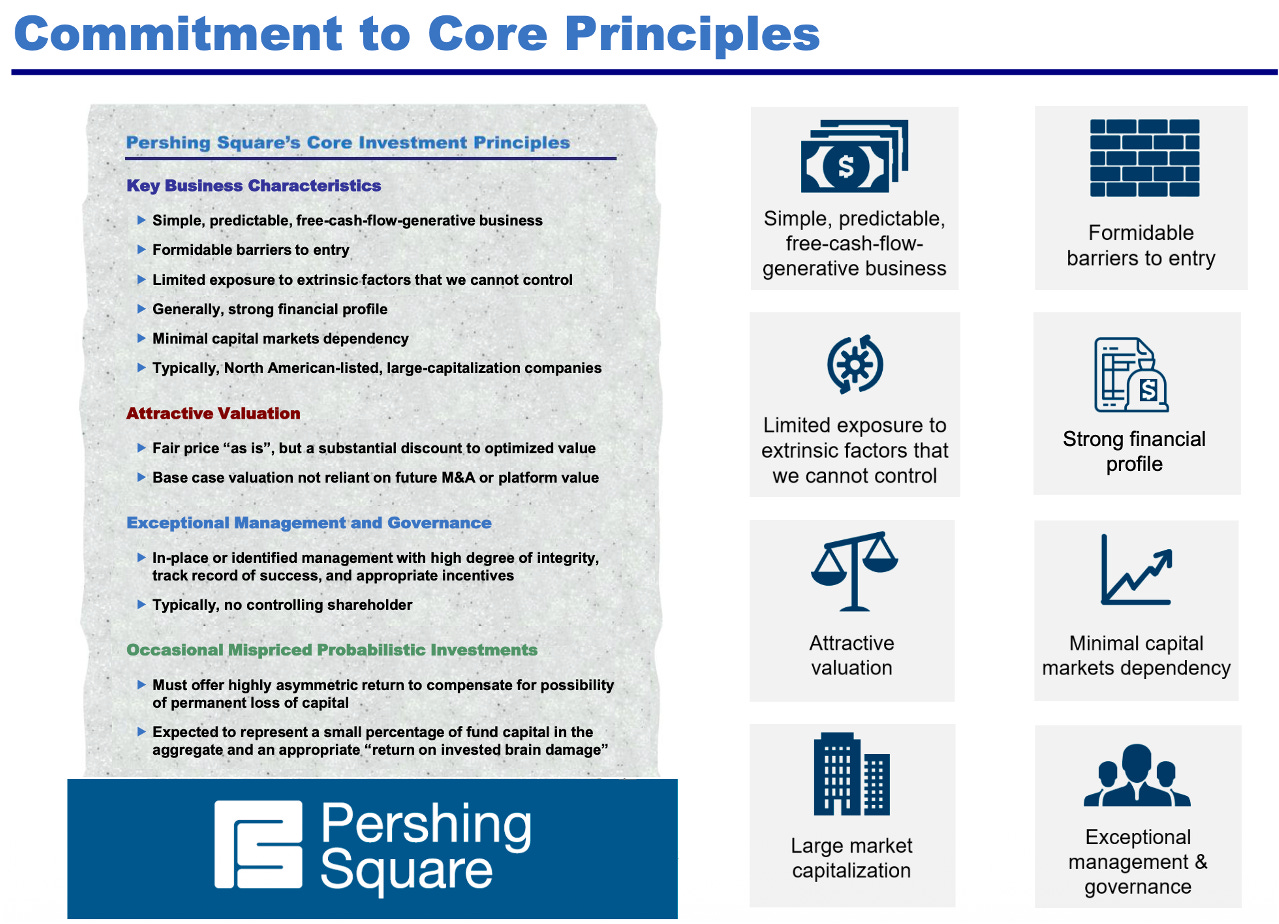

Bill Ackman’s recent work points in a strikingly similar direction, only from the vantage point of a stock picker rather than a techno-optimist. Recent materials from his firm, Pershing Square, make the case that the market has been too quick to punish high-quality businesses over near-term fears tied to AI disruption or AI-related spending. On Meta, for example, Pershing argues that concerns over elevated spending miss AI’s potential to accelerate revenue growth and reinforce long-term competitive moats.

The chart above offers a simple representation of the investment process followed by Bill Ackman. At its core, it emphasizes identifying high-quality, durable businesses, allowing for periods of market mispricing, and deploying capital when sentiment diverges from long-term fundamentals. It is a disciplined approach built on conviction, patience, and the ability to act decisively when opportunity arises.

More broadly, Pershing continues to define its ideal investment as a simple, predictable, free-cash-flow-generative business with formidable barriers to entry and a strong financial profile. That is not a framework built for owning fragile feature companies. It is a framework built for identifying businesses whose quality allows them to absorb disruption, monetize it, and come out the other side with stronger economics than the market initially believed possible.

Notice the parallel.

Our February issue argued that productivity and technological acceleration could support stronger economic activity than consensus expects. Andreessen is arguing, in his own far more provocative style, that the employment and output consequences of AI may skew much more positively than the current doom loop implies. Ackman, meanwhile, is acting on a related idea in markets: the crowd is so focused on the immediate fear that it is beginning to misprice the longer-term earnings power of certain high-quality businesses.

Different language. Different angle. But the rhyme is obvious.

Now, let’s be disciplined.

None of this means every software company is safe. Far from it. Some really are in trouble. Some products are little more than expensive wrappers around tasks that frontier models will increasingly commoditize. Some moats will prove imaginary. Some “platforms” will turn out to be features with good branding.

We are not denying that. We are insisting on something subtler... and more useful: the first phase of a disruption wave almost always punishes with a sledgehammer before it rewards with a scalpel.

That is why this moment deserves our attention. The market has become highly effective at pricing the fear... but much less precise in pricing the boundaries of that fear.

This chart already discloses the instrument we will be adding to the Model Portfolio to capture this opportunity. For those paying close attention, the positioning, sector exposure, and underlying components offer a clear indication of the direction we are about to take.

It has been quick to imagine what AI agents may replace. It has been less careful in asking which systems remain deeply embedded, which workflows still command proprietary data, which products sit too close to the customer’s operational bloodstream to be casually removed, and which companies may actually benefit as the volume of digital work explodes.

That is the distinction we care about.

Because if we are entering a world of faster output, cheaper software creation, and broader workflow automation, then the winners will not all be the model makers themselves. Some will be the incumbent toll roads, the entrenched systems of record, the deeply embedded orchestration layers, and the quality businesses whose products remain too woven into real enterprise behavior to be wished away by a scary demo.

And that is precisely where the opportunity begins to take a more actionable form.

Because once you accept that the disruption is real, but that the market’s first attempt to price it has likely been too blunt, the next question becomes obvious: how do we express that view intelligently?

That is where we turn next.

[1] AI agents are software systems powered by advanced AI models that can execute multi-step tasks autonomously, rather than simply respond to prompts. Unlike traditional tools, AI agents can plan, act, iterate, and interact with digital environments, from writing code and analyzing data to managing workflows. Their rise marks a shift from assistive software to operational software, raising both productivity potential and concerns around workflow disruption.

[2] Estimates for AI-related capital expenditure continue to trend higher, not lower. Across major hyperscalers (including Microsoft, Amazon, Alphabet, and Meta), consensus expectations for infrastructure spending have been repeatedly revised upward as the scale of the AI buildout becomes clearer. This reflects the enormous capital intensity required to support model training, inference, and data-center expansion. Far from peaking, AI capex is still in an acceleration phase, reinforcing the view that we are in the early stages of a multi-year investment cycle with significant implications for productivity, growth, and market leadership.