The Abundance Shock

When intelligence gets cheap, what becomes expensive?

Everyone is asking whether AI will be inflationary or deflationary.

We think that is the wrong question.

The better question is this: when intelligence gets cheap, what becomes expensive?

That distinction matters because artificial intelligence is beginning to compress the cost of a vast layer of cognitive work: drafting, coding, summarizing, researching, analyzing, organizing, and automating tasks that once required expensive human time.

In some areas, that will be profoundly deflationary. Certain services will become cheaper. Certain workflows will require fewer people. Certain software layers will become easier to build. And some business models that looked protected may prove far more fragile than investors assumed.

But cheaper intelligence does not mean a cheaper system.

AI still needs power, compute, data, trusted distribution, legal permission, regulatory clearance, and institutional adoption. And if it begins to displace large amounts of white-collar labor, the consequences will not be absorbed quietly. They will be politicized.

That is why the inflation-versus-deflation debate feels too crude. AI may be deflationary in what it replicates, inflationary in what it cannot replace, and politically explosive in how it distributes the gains.

In April’s issue of Alpha Tier, we argued that the old Debasement Trade is not dead. It is evolving. Scarcity is not disappearing. It is moving.

And once scarcity moves, capital must move with it.

Here is “The Abundance Shock.”

Let’s begin by granting the abundance camp its strongest point. Artificial intelligence is not merely improving existing workflows at the margin. It is beginning to compress the cost of cognition itself.

That is the real break with the recent past.

For years, investors were used to thinking about technological progress in fairly familiar (and linear) ways: better software, faster chips, improved interfaces, more data, more automation at the edges. Important, yes... but still incremental enough that the old economic categories remained mostly intact. Labor still looked scarce. Skilled cognitive work still looked expensive. Certain kinds of analysis, drafting, coding, organizing, and decision support still required a meaningful amount of paid human time.

Now that assumption is starting to wobble.

Because what the latest generation of AI systems is making cheaper is not only software development or internet search or customer support in the narrow sense.

It is a much broader layer of economically useful mental activity. Summarization. Pattern recognition. First-draft writing. Spreadsheet construction. Data cleaning. Research assistance. Code generation. Document review. Workflow orchestration. All of these tasks, which used to sit comfortably inside the wage bill, are beginning to migrate toward the compute bill.

That matters enormously. Because once a capability moves from the wage bill to the compute bill, markets begin to reprice the world around it. Some companies start looking less like differentiated franchises and more like expensive wrappers around functions that are becoming cheaper by the month.

Certain white-collar workflows begin to look less labor-intensive than they did before. Some business models begin to lose the comforting illusion of scarcity that protected them until recently. And investors, sensing that shift, do what they always do when the future arrives faster than expected:

They overreact.

That is exactly what we argued in this month’s issue of VMF’s Strategic Asset Allocation. The market’s first response to this new reality was not careful, nuanced, or discriminating. It was blunt. High-quality software was sold alongside lower-quality software. Mission-critical systems were punished alongside fragile point solutions. Embedded workflows were treated as if they were just another feature waiting to be commoditized by the next model release.

But even if the market’s first reaction has been too crude, the underlying signal should not be ignored.

The abundance thesis is not fantasy.

Something very real is happening.

If intelligence-like tasks become cheaper, then certain forms of output can rise dramatically without the usual increase in labor input. More can be produced with fewer hours. More workflows can be automated. More organizations can scale with leaner teams. More experimentation can occur because the cost of trying, building, testing, drafting, and iterating falls.

That is why some of the louder voices in technology have started talking in such radical terms. They are not merely predicting better software margins. They are gesturing toward a world in which intelligence itself begins to look less like a scarce factor of production and more like an abundant utility.

And to be fair, one can see why that idea is so seductive.

If a rising share of cognitive labor can be replicated cheaply, then the immediate first-order consequence should be deflationary pressure in the things most exposed to that replication.

Certain services should become cheaper to deliver.

Certain functions should become less labor-hungry.

Certain software layers should become easier to build.

Certain bottlenecks that once supported pricing power may begin to weaken.

In that sense, the abundance camp is not wrong to say that AI carries a strongly deflationary impulse.

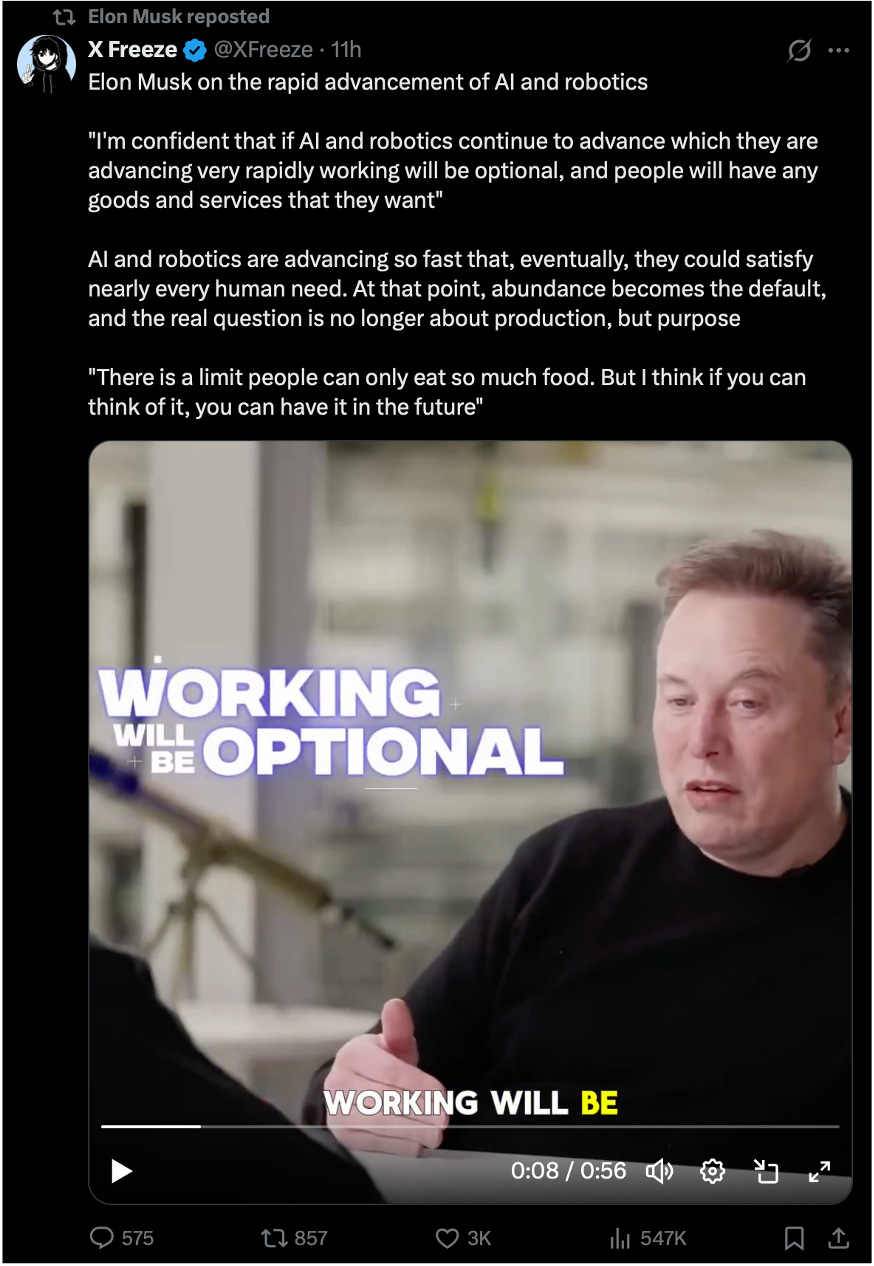

That is why Elon Musk is talking this way. His claim is not merely that AI will make the economy more productive. It is that AI and robotics will make abundance so great that work could become “optional,” money could become “irrelevant,” and government-funded “universal high income”¹ could offset AI-driven unemployment without igniting inflation.

That is not just a technological forecast.

It is a civilizational claim about abundance. But beneath the utopian phrasing lies a harder implication: “work will be optional” may simply be a euphemism for large-scale labor displacement.

And Musk is not alone. Sam Altman (OpenAI) has long argued that sufficiently powerful AI could drive the price of many kinds of labor toward zero, radically lower the cost of goods and services, and force a redesign of how technological wealth is distributed across society.

His answer has not been a defense of the old wage system, but a search for broader mechanisms of distribution, including an “American Equity Fund”² and annual citizen payouts tied to capital ownership...

This is entirely consistent with what we highlighted in February’s issue of VMF’s Strategic Asset Allocation and revisited again in April: the possibility of a step change in economic growth, with AI-led productivity gains allowing output to rise much faster than labor input, lowering unit costs even as activity expands.

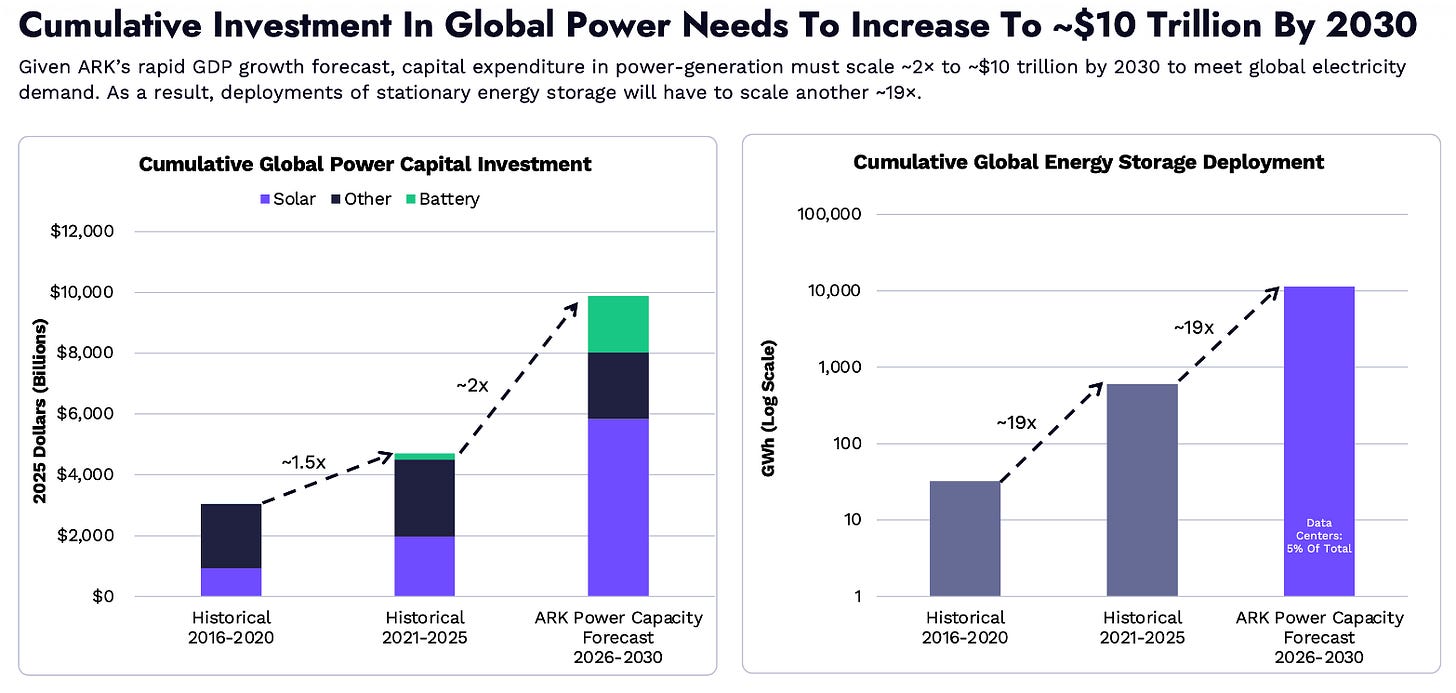

Cathie Wood of ARK Invest now packages this vision as “The Great Acceleration,” arguing that AI and related technologies could drive one of the largest investment booms in history and materially reshape growth by the end of the decade.

More output with fewer inputs. That is the prosperity case.

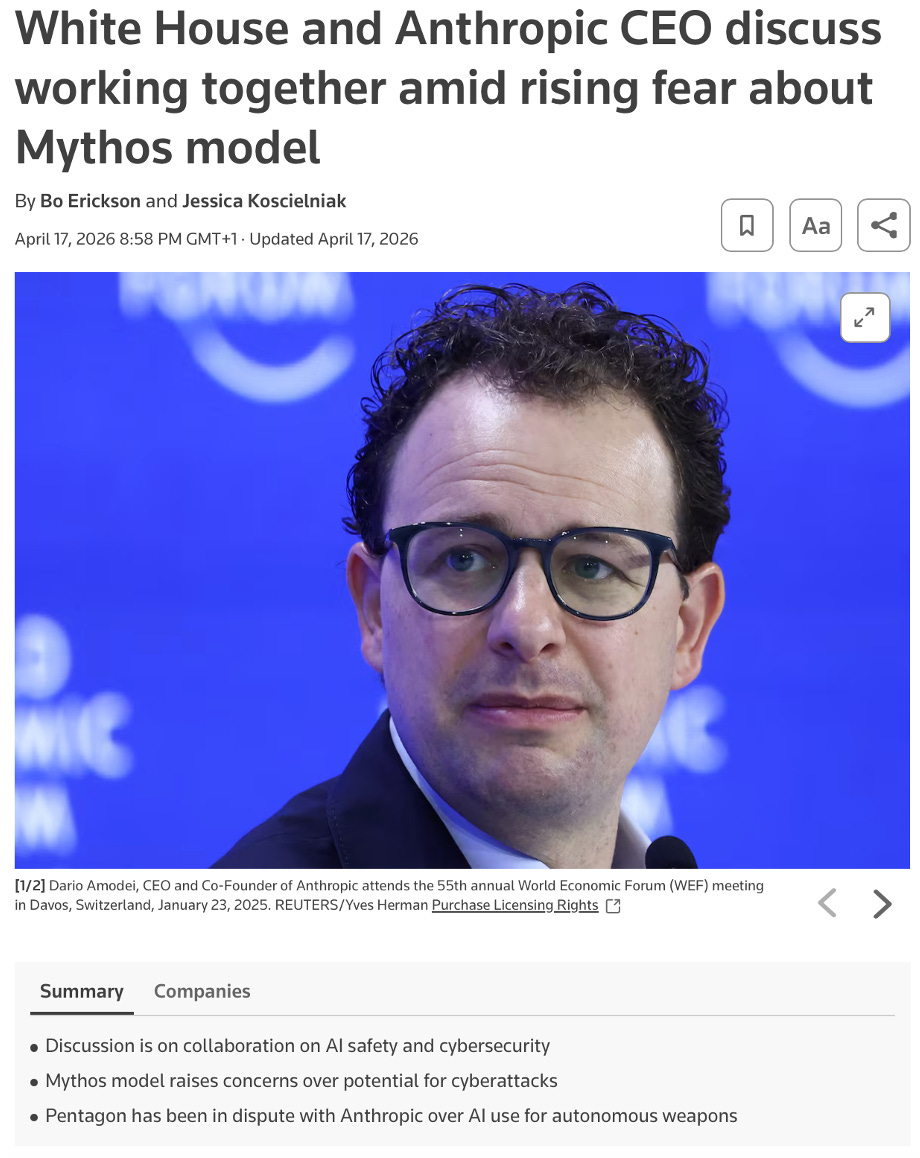

Dario Amodei (Anthropic) takes the argument further still. He has described the arrival of powerful AI as the entry of “a country of geniuses in a datacenter” into the global economy, and he believes systems of that kind may be much closer than most people think.

Anthropic’s new Mythos model helps explain why the mood around the frontier is becoming more charged by the month...

Presented as a watershed model because of its unusual capabilities in computer security tasks, Mythos is also being released through the tightly controlled Project Glasswing initiative, reflecting concern that it could accelerate the discovery and exploitation of cybersecurity vulnerabilities.

In other words, the people closest to the frontier are not merely selling upside. They are also signaling that the capability jump is becoming unsettling even for them.

We could keep going.

But the broader pattern matters more than the personalities. They are all, in one way or another, talking their own book. That does not make them wrong. But it does mean we should listen with interest, not surrender.

Amara’s Law³ remains useful here: investors tend to overestimate the effect of a new technology in the short run and underestimate it in the long run. That warning cuts both ways. It warns against breathless extrapolation. But it also warns against complacency.

And on that point, we should be clear: we do not believe this abundance wave is merely coming. We believe it is already here.

But this is also where the abundance thesis often becomes lazy. It takes one true statement... that some forms of intelligence are becoming cheaper... and quietly stretches it into a much larger and much weaker conclusion:

that the whole system is heading toward cheapness.

We do not think that leap holds.

A model may draft the memo, summarize the report, clean the dataset, write the code, or assist with the analysis, but none of that means the broader system in which those capabilities operate suddenly becomes frictionless or inexpensive.

The model still needs electricity. It still needs compute. It still needs data. It still needs legal permission to use certain inputs. It still needs to be embedded into a workflow, supervised by someone accountable when the output is wrong, and distributed through a trusted system.

Most importantly, it still needs to operate inside a political order that will decide how the gains are shared and how the disruption is absorbed. That is the distinction the abundance camp too often blurs. Cheaper cognition is not the same thing as a cheaper system. This is also why the more utopian versions of the story deserve to be treated with some caution...

Take the now fashionable claim that work may become “optional.” Even if we grant the direction of travel, the economic question does not disappear. It becomes harder:

Optional for whom?

If firms can produce more with fewer workers, do prices simply fall across the economy?

Do wages keep pace?

Do displaced workers move smoothly into better and higher-value roles?

Or does the political system do what political systems usually do when productive change becomes socially destabilizing: intervene?

That last question matters far more than the abundance camp tends to admit.

Technology is never introduced into a vacuum. It arrives inside a social order...

And our social order is not entering this transition from a position of calm. It is entering it with debt burdens already high, fiscal deficits already large, trust already frayed, politics already polarized, and an electorate already highly sensitive to inequality, cost of living, and institutional failure.

That matters even more if, as we have argued, we are now in the final stretch of a Fourth Turning.

Seen through that lens, today’s rhetoric around optional work, universal basic income, wealth transfers, and broad societal redesign may be doing more than advertising technological abundance. It may be offering a first sketch of the order that could follow this crisis. A glimpse, perhaps, of the next First Turning trying to imagine itself before the current Fourth Turning has fully run its course.

But that is precisely the point: before renewal comes resolution, and before the next order is built, this one still has to break.

In that kind of environment, abundance is unlikely to be processed neatly. It is far more likely to be politicized. That means transfers. That means subsidies. That means industrial policy. That means demands for regulation. That means fights over ownership, access, compensation, copyright, and control.

In other words, even if AI lowers the cost of certain tasks, the transition costs may still be socialized. Even if software becomes easier and cheaper to build, the infrastructure required to run the new system may become more expensive. Even if some services become dramatically more efficient, the political response to displacement may involve exactly the kind of redistribution, fiscal support, and institutional improvisation that keeps monetary pressure alive elsewhere.

That is why we are not willing to reduce this moment to a sterile inflation-versus-deflation debate.

The better way to think about it is this:

AI is likely to be deflationary in what it replicates, inflationary in what it cannot replace, and politically explosive in how it distributes the gains.

Once you see that, the abundance story looks very different. Not like a clean glide path toward universal cheapness, but like a messy regime shift in which one layer of the economy becomes more abundant while another becomes more bottlenecked, more contested, and, therefore, more valuable.

Some things may indeed get cheaper. But the complements around them may become more important, not less: power, grid capacity, premium compute, trusted data, copyrighted inputs, regulatory clearance, secure distribution, compliance infrastructure, authenticated brands, and deeply embedded systems.

Abundance in one layer, in other words, does not eliminate scarcity in another. It can intensify it.

And that is the point the market still seems reluctant to price properly. When a powerful new technology lowers the cost of one input, capital does not stop caring about scarcity...

It goes looking for the new scarcity.

That, in our view, is exactly what is happening now. So yes, intelligence may be getting cheaper. But that does not mean the Debasement Trade dies here. It means it evolves. And it means the market is asking the wrong question.

The real one is harder, and far more investable: when intelligence gets cheap, what becomes expensive?

¹ Universal Basic Income (UBI) is a policy under which the state pays all residents a regular cash amount, unconditionally and regardless of employment status, with the aim of creating a basic financial floor beneath society. In other words, UBI is designed to guarantee subsistence, not affluence. Musk’s more recent idea of “Universal High Income” (UHI) can be understood as a more radical, abundance-era variant of the same concept. Instead of merely ensuring that basic needs are met, UHI assumes that AI and robotics will make goods and services so abundant that governments could distribute much larger income transfers without necessarily generating inflation. So, the clean way to reconcile the two is this: UBI is a universal floor; UHI is Musk’s vision of a much higher universal standard of living made possible by extreme automation and productivity gains. Whether that future proves economically feasible is another question entirely.

² Sam Altman’s “American Equity Fund” is a proposal to distribute the gains from an AI-driven economy by giving every adult American a direct stake in the country’s capital base, not just a cash handout. In Altman’s framework, the Fund would be capitalized by taxing large companies 2.5% of their market value each year, payable in shares, and taxing privately held land 2.5% of its value, payable in dollars. Those proceeds would then be distributed annually to all U.S. adults in a mix of cash and company shares. The key idea is that, as AI shifts value creation away from labor and toward capital, a traditional wage-based social contract becomes less sufficient. Altman’s answer is not simply more income redistribution after the fact, but broader ownership of the assets likely to capture the gains from AI and rising land values. In his own logic, this would make capitalism more inclusive by turning citizens into equity holders in national value creation, rather than leaving most of the upside concentrated in companies and landowners. So, the cleanest way to understand the proposal is this: UBI gives people income; the American Equity Fund aims to give them ownership.

³ Amara’s Law is the observation that we tend to overestimate the impact of a technology in the short run and underestimate it in the long run. The idea, associated with futurist Roy Amara and still used by the Institute for the Future, is useful because it captures the strange rhythm of technological change: excitement usually runs ahead of reality at first, but once the infrastructure, habits, and business models finally catch up, the long-term transformation often proves much larger than the early hype ever imagined. That is why Amara’s Law fits so neatly with Bill Gates’s well-known warning that we “always overestimate the change that will occur in the next two years and underestimate the change that will occur in the next ten.”